The future of water operations is not a collection of copilots. It is a governed AI operational platform that can watch, remember, reason, and act through the utility stack under human authority.

Right now, most water utilities are under pressure to define an AI strategy. Boards are asking about it. Teams are experimenting with it. Vendors are selling it.

But inside the utility, the real problem is not whether AI matters. It is how to use it without creating a second generation of fragmentation, vendor dependence, and operational risk.

That is why so many utilities feel stuck.

They know AI is important. They do not yet know how to transform the utility around it. They do not know which strategy will scale beyond isolated pilots.

And they have good reason to worry: the sector is at risk of repeating the same digital mistake it has already spent decades trying to correct.

The first wave of utility digitalization solved real problems. It also fragmented the operating environment. Each function got its own system, and each system its own data model, workflow logic, permissions, identifiers, and blind spots.

Utilities digitized work, but they also digitized silos. That became the real blocker to full transformation: not a lack of software, but a lack of shared operational context.

The old mistake is back with AI. This time, the cost of getting it wrong could be much higher. Adding language layers to each fragment of the stack may make individual tools easier to use. It does not create an operating model.

At 3 AM, during a pressure event or an outage, no operator needs another interface. They need one operating picture, safe options, clear checkpoints, and the right team member in the loop.

The breakthrough is architectural: a shared operational layer where intelligence lives in the platform itself, understanding intent, unifying data, reasoning over network reality, and moving teams from alarm to action as one system.

Qatium did not begin with the current AI cycle. Its roots run deeper: in the experience of our founders, Jaime Barba and Roberto Tórtola; in the transformation of Aguas de Valencia from within into one of the world’s most advanced utilities; in the creation of successful digital water companies; and, above all, in what we have learned working with 250+ utilities that already trust Qatium across 15 countries.

That vantage point makes one thing clear: the future of utility operations will not be built around standalone agents, but around the runtime that makes agentic AI safe, useful, and decision-grade in the real world.

That is what we have spent the last 5 years building: an open, water-native AI platform designed to deploy in days, work across the existing utility stack, understand the network, and orchestrate intelligence safely.

Only now are the underlying pieces ready for that architecture to fully come to life: capable models, tool use, orchestration runtimes, and simulation workflows. Our mission is to build the platform where that future can work.

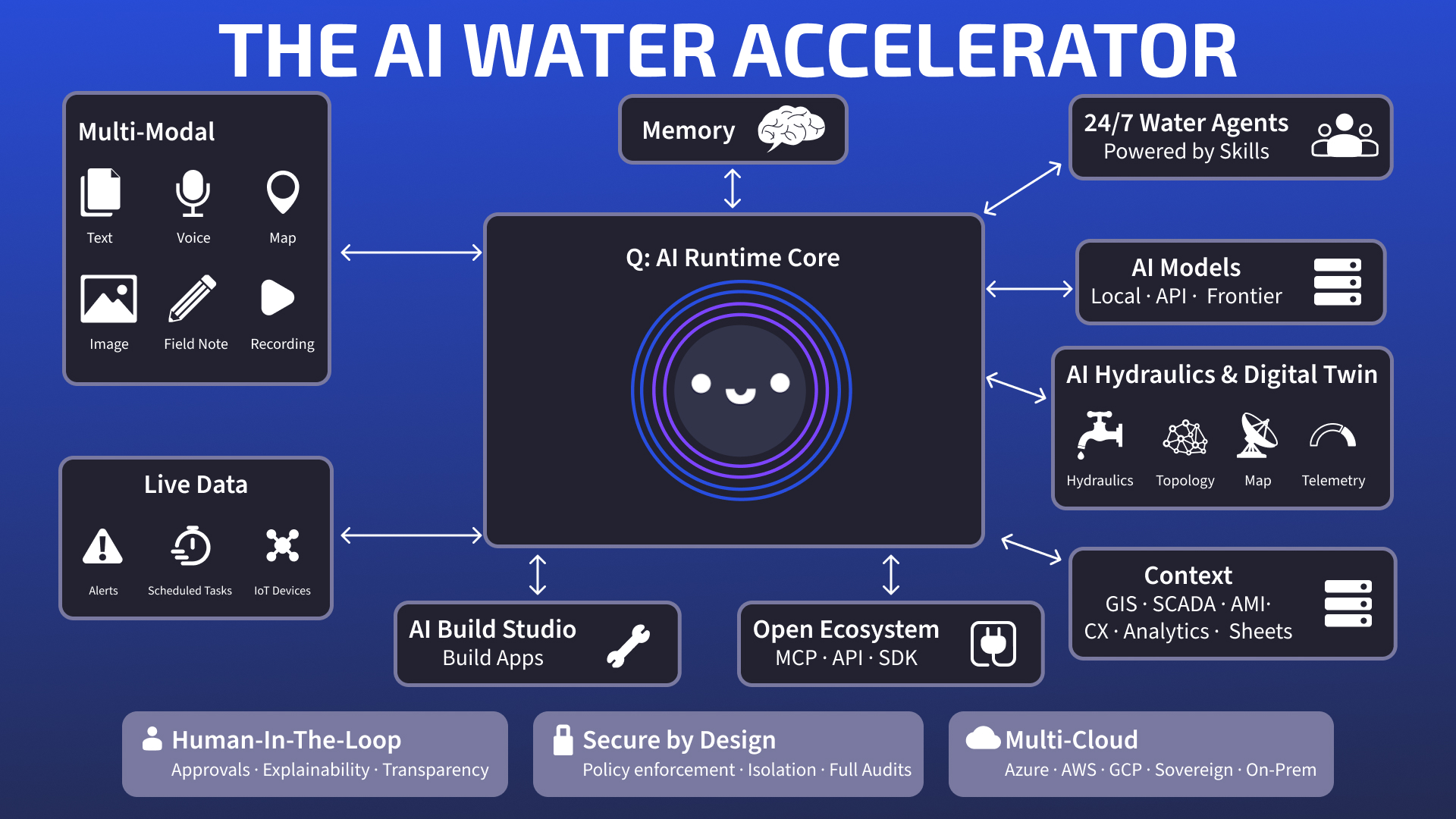

These are the core components of the open, progressively adoptable platform we are building toward, and the one we believe utilities will need to succeed.

Q sits at the center of the platform. It is not a chatbot or an assistant. It is the operational engine that governs how intelligence moves through the system.

Operations work is not a question. It is a sequence: understand the situation, assemble context, test safe options, and involve the right human before anything consequential happens.

Q runs that sequence: interpreting intent, retrieving context, classifying risk, selecting the allowed model path, activating agents and skills, deciding when to query systems, when to simulate, where human approval must enter, and how to keep operational state alive across incidents and shifts.
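As a rough illustration of that sequence, the sketch below orders the steps for one request depending on whether it is consequential. The `Risk` levels, the step names, and the rule that any change to network state counts as consequential are assumptions made for this example, not a description of how Q is implemented.

```python
from enum import Enum


class Risk(Enum):
    ROUTINE = "routine"              # read-only: nothing in the network changes
    CONSEQUENTIAL = "consequential"  # would change network state


def classify_risk(changes_network_state: bool) -> Risk:
    # Assumed rule for this sketch: any state change is consequential.
    return Risk.CONSEQUENTIAL if changes_network_state else Risk.ROUTINE


def plan_steps(changes_network_state: bool) -> list[str]:
    """Return the ordered steps for one request (hypothetical step names)."""
    risk = classify_risk(changes_network_state)
    steps = [
        "interpret_intent",
        "retrieve_context",
        "classify_risk",
        "select_model_path",
    ]
    if risk is Risk.CONSEQUENTIAL:
        # Test options in simulation, then stop for a human before acting.
        steps += ["simulate_options", "human_approval"]
    steps.append("act")
    return steps
```

In this sketch a read-only question goes straight from model selection to action, while anything that would change the network always passes through simulation and a human checkpoint first.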

From there, the architecture has to solve 3 things well: how work enters, how context persists, and how action becomes reliable.

Water work does not arrive in one format. Operators type, speak, and share maps, images, recordings, and field notes. The platform has to meet that reality where it happens.

The deeper requirement is continuity across incidents, shifts, and recurring operational work. Not chat history, but operational memory: network context, user role, the incident in progress, approved assumptions, and the constraints shaping the next step.

Around Q sits a team of specialized agents for recurring operational work: incident triage, leakage and NRW, pressure and energy, planning, reporting, and coordination. Q can coordinate those agents directly, including delegated background work when the job has to be split across systems, teams, and time.

Below them sits the skills layer. Agents handle open-ended reasoning. Skills handle bounded, repeatable execution.

No single AI model fits every task.

Water operations demand different trade-offs across sensitivity, latency, cost, and governance. Utilities need deployment choice: local models, external models through their own API keys and contracts, or frontier models under controlled conditions.

That choice is not a technical detail. It is what makes the right intelligence usable for each operational problem. Model choice is part of the architecture, not a vendor bet.
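One way to picture model choice as architecture is a small routing policy. The sketch below is illustrative only: the `Task` fields, the `ModelPath` names, and the rule that sensitive or latency-critical work stays on a local model are assumptions, and a real utility would set its own policy.

```python
from dataclasses import dataclass
from enum import Enum


class ModelPath(Enum):
    LOCAL = "local"              # model running inside the utility boundary
    UTILITY_API = "utility_api"  # external model via the utility's own keys and contracts
    FRONTIER = "frontier"        # frontier model under controlled conditions


@dataclass(frozen=True)
class Task:
    name: str
    sensitive: bool          # touches data that must not leave the utility boundary
    latency_critical: bool   # needs an answer inside an operational time window
    complex_reasoning: bool  # benefits from the most capable model available


def select_model_path(task: Task) -> ModelPath:
    # Assumed policy: governance and latency constraints win over capability.
    if task.sensitive or task.latency_critical:
        return ModelPath.LOCAL
    if task.complex_reasoning:
        return ModelPath.FRONTIER
    return ModelPath.UTILITY_API
```

The point of the sketch is the ordering of the checks: sensitivity and latency are evaluated before capability, so a more capable model never overrides a governance constraint.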

Utilities already run GIS, SCADA, AMI, CMMS, customer systems, analytics tools, and spreadsheets. The answer is not rip-and-replace. The platform has to sit above that stack, preserve prior investment, and turn fragmented systems into one operational context.

That is what Qatium’s data backbone does. It normalizes 100+ source patterns into a canonical representation of network entities, telemetry, and operational context. Without that substrate, agents reason over silos. With it, they reason over the utility.
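To make the idea of a canonical representation concrete, here is a minimal sketch that maps two differently shaped pressure records into one common form. The source names (`scada`, `logger`), the field names, and the `PressureReading` type are hypothetical and are not Qatium's actual data model.

```python
from dataclasses import dataclass

PSI_TO_KPA = 6.894757  # 1 psi expressed in kilopascals
BAR_TO_KPA = 100.0     # 1 bar expressed in kilopascals


@dataclass(frozen=True)
class PressureReading:
    """Canonical form: one asset id, one unit (kPa), one timestamp format."""
    asset_id: str
    pressure_kpa: float
    timestamp: str  # ISO 8601 string
    source: str


def from_scada(row: dict) -> PressureReading:
    # Hypothetical SCADA export: tag name, pressure in psi.
    return PressureReading(row["tag"], row["value_psi"] * PSI_TO_KPA, row["ts"], "scada")


def from_logger(row: dict) -> PressureReading:
    # Hypothetical field logger export: device id, pressure in bar.
    return PressureReading(row["device"], row["bar"] * BAR_TO_KPA, row["time"], "logger")


ADAPTERS = {"scada": from_scada, "logger": from_logger}


def normalize(source: str, row: dict) -> PressureReading:
    adapter = ADAPTERS.get(source)
    if adapter is None:
        raise ValueError(f"no adapter for source pattern: {source}")
    return adapter(row)
```

Each new source pattern becomes one more adapter, and everything downstream reasons over `PressureReading` without knowing which system the value came from.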

Better AI in water depends on working through the tools utilities already use every day. Those systems remain systems of record, but their data and actions must feed the orchestration layer. MCP-style connectivity links models to tools and resources. The platform adds the policy, permissions, and runtime control that make those connections operational.

This is where the platform meets network reality.

The network is understood as a hydraulic model, geospatial context, operational graph, and live data environment at once. Assets, telemetry, topology, pressure, isolation boundaries, service impact, and scenario limits are brought together in one deterministic representation of network behavior, strengthened by AI surrogate models for speed and better inference under imperfect information.

This is not AI instead of physics.

It is AI-native hydraulic intelligence with physics verification. Deterministic models remain the trust backbone. Surrogate models add speed under uncertainty. Together, they move the digital twin into the operating loop.

In the current geopolitical environment, utilities are under growing pressure to control dependencies, data, cyber risk, and where inference runs. Water is critical infrastructure. Security cannot be a wrapper added later.

That starts with data sovereignty: utility data must remain under utility control, inside approved boundaries, with no cross-tenant access, no reuse outside policy, and no third-party access unless explicitly authorized. In the most sensitive cases, that extends to fully isolated, air-gapped deployments.

Sovereignty also applies to infrastructure: utilities may need to deploy on Azure, AWS, GCP, other cloud providers, private instances, local environments, national or sovereign clouds, or fully on-prem, because those choices determine where data, inference, memory, tool execution, and operational control actually live.

From there, the controls have to hold at runtime: policy enforcement, least privilege, per-utility isolation, permission-scoped tools, approval gates, and full audit trails. Trust is not a branding claim. It is an evidence system.

And human authority has to be explicit: which human, under which authority, at which checkpoint. An operator approves a maneuver. A planner signs off on an outage scenario. A manager authorizes an external report.
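A minimal sketch of such a checkpoint, using the three examples above: each action type names the role allowed to approve it, and every decision is written to an audit trail whether it is approved or not. The action names, the role names, and the `AuditTrail` structure are assumptions for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical mapping from action type to the role that may approve it.
REQUIRED_ROLE = {
    "maneuver": "operator",
    "outage_scenario": "planner",
    "external_report": "manager",
}


@dataclass
class AuditTrail:
    entries: list[dict] = field(default_factory=list)

    def record(self, action_type: str, user: str, role: str, approved: bool) -> None:
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "action_type": action_type,
            "user": user,
            "role": role,
            "approved": approved,
        })


def request_approval(action_type: str, user: str, role: str, trail: AuditTrail) -> bool:
    """Approve only if the user's role matches the checkpoint; always log."""
    required = REQUIRED_ROLE.get(action_type)
    # Unknown action types are denied by default.
    approved = required is not None and role == required
    trail.record(action_type, user, role, approved)
    return approved
```

Denials are logged alongside approvals, so the trail shows who attempted what and under which role, not only what went through.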

This architecture does more than improve workflows. It changes how operational work starts, moves, and stays under control across the utility.

Instead of waiting for a prompt, the platform can watch events, run scheduled checks, keep incident state alive across shifts, assemble context, open the right workflow, delegate background analysis, test safe options, and bring ranked actions or approved low-risk execution to the right person.

For the operator, that means less time chasing systems and reconstructing context, and more time to make sound decisions under pressure.

No vendor can cover the full problem space of a water utility alone.

Utilities need room for in-house development, partners, other vendors, research collaboration, and local workflows. In water, closed platforms do not just limit choice. They create lock-in, slow learning, and slow response.

In most utility software, the app comes first and the platform is inferred later. We take the opposite view: open platform first, apps second.

For us, that is not a slogan. It is the discipline required to avoid repeating the last software mistake: fragmenting operations into disconnected tools and trying to stitch coherence back together afterwards.

That is the model we want for Qatium: a shared platform where utilities, partners, other vendors, developers, and researchers can build as first-class participants on top of the same governed primitives, without fragmenting the system again.

We recently launched in preview a new open platform capability: creating new tools and workflows on top of Qatium using plain language, without needing to code.

Partners and developers had already been extending Qatium through our SDK and, more recently, through MCP-connected tools such as Claude Code and Codex.

Build Studio shortens that path. Once the platform sits inside the utility’s trust, policy, and security boundaries, authorized teams can describe what they need, test it, and launch it on top of the same governed building blocks.

For the operator, that means less waiting and a faster path from operational need to a working tool. The point is not that AI can write code. It is that operational needs can become working software fast enough to matter.

The future of AI in water will not be defined by copilots, but by platforms that utilities can govern, trust, and build on. Platforms that can carry context, hydraulics, workflow, security, and human judgment in the same system.

That is the future we at Qatium want to build with utilities ready to move first. And no matter where you are on the journey, Qatium can help accelerate the path.

Water utilities do not need just more AI.

They need better AI.

Author

Manu Arianoff

Originally published on LinkedIn on April 9, 2026, this article is republished here.
