AI Deep Dive: How has AI changed, and what does that mean for the water sector?

This article was taken from the paper “AI & Water management — What utilities need to know now.” You’ll find the full paper here.

If you want to understand the opportunities and barriers to the water industry’s use of AI, it’s helpful to understand how AI works, how it’s recently changed, and where we are now with the technology (and why that matters).

First, “Artificial Intelligence (AI)” is a broad term that encompasses different fields and applications. Some broadly define AI as a computer’s ability to mimic human learning and problem solving. Others think of AI as a branch of science at the intersection of computer science and neurology, because you need to understand how humans think to have a computer mimic the human thinking process.

Today, most AIs are Artificial Narrow Intelligence (ANI), also called “Narrow AI”, because they focus solely on solving a specific task or problem. For example, in the water sector we use computer vision, a field of AI that analyzes images and video, to dramatically speed up an existing process in which people inspect footage from sewer Closed-Circuit Television (CCTV) cameras to identify defects in pipes. Computer vision for this purpose could be considered a narrow AI because it just needs to be able to tell the difference between a clean pipe, a pipe with a defect, or something in a pipe, such as a rag or an animal. It doesn’t have to be too smart; its intelligence is relatively narrow. And that’s more or less where AI has been until recently: most AI applications employ artificial narrow intelligence.

CCTV image showing raccoon in pipe

We’re slowly getting into what’s called Artificial General Intelligence (AGI), sometimes referred to as “General AI.” AGI refers to AI systems that are capable of performing a wide range of intellectual tasks that are typically associated with human intelligence, such as reasoning, problem-solving, learning, and perception.

While there has been significant progress in the development of AI systems in recent years, we are only beginning to achieve true AGI. Some argue that chatbots and self-driving vehicles are AGIs because they’re programmed to respond to stimuli, in contrast to narrow AI, which only has to flag something that doesn’t fit a pattern and alert a human to intervene.

If a self-driving vehicle, for example, sees a wounded person in the middle of the road, it has to decide to do something, and its decision is mission critical. While driving around the wounded person may be a solution, it is not the best one. At the same time, the AGI wouldn’t be an AGI if all it knew how to do was alert someone and ask, “What do I do now?” It has to recognize and deal with the problem immediately by stopping, swerving out of the way, or making some other response. That is vital to the vehicle functioning successfully, and if the AI fails, it adversely affects society.

Artificial Super Intelligence (ASI), also referred to as “Super AI”, comes after AGI. This is the kind of AI you may choose to worry about, but you don’t have to worry about it now, because we’re not near that kind of artificial intelligence. Super AI is largely a hypothetical future form of artificial intelligence that’s transcendental. It surpasses human intelligence, it’s self-aware, and it can think on its own. There’s no real-world example of super AI, but you can look to Skynet from the beloved Terminator franchise if you want to consider the potential power and risks of a fictional ASI. Again, we’re nowhere near this kind of AI, and we may not achieve it (or want to do so).

We can’t talk about AI’s progress in the water industry, or at large, without talking about machine learning. AI learns through machine learning. There are many types of machine learning, but today the most popular is the artificial neural network (ANN), an approach that tries to imitate the way humans learn. ANNs are layers of linked processing nodes that simulate the way the human brain works. The most basic ANN requires only a few layers of processing nodes (a small ANN). This kind of AI is considered “non-deep,” and it’s limited because it requires human intervention to learn from its mistakes.
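To make “layers of linked processing nodes” concrete, here is a minimal sketch of a small ANN passing one input through two layers. The weights, the three input features, and the function names are all made up for illustration; a real network would learn its weights from training data rather than have them typed in by hand.

```python
import math


def sigmoid(x):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def layer_forward(inputs, weights, biases):
    """One layer: every node takes a weighted sum of all inputs,
    adds its bias, and applies the sigmoid activation."""
    return [
        sigmoid(sum(w * x for w, x in zip(node_weights, inputs)) + b)
        for node_weights, b in zip(weights, biases)
    ]


def network_forward(inputs, layers):
    """Feed the inputs through each layer in turn."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations


# Hypothetical, hand-picked weights (assumption, not a trained model):
# 3 input features -> 2 hidden nodes -> 1 output node.
layers = [
    ([[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]], [0.0, 0.1]),
    ([[1.0, -1.0]], [0.0]),
]

features = [0.9, 0.1, 0.4]  # made-up features extracted from one CCTV frame
score = network_forward(features, layers)[0]
print(f"defect score: {score:.3f}")  # a value between 0 and 1
```

A “deep” network follows the same pattern with many more layers and nodes, which is why it needs far more data and computing power to train.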

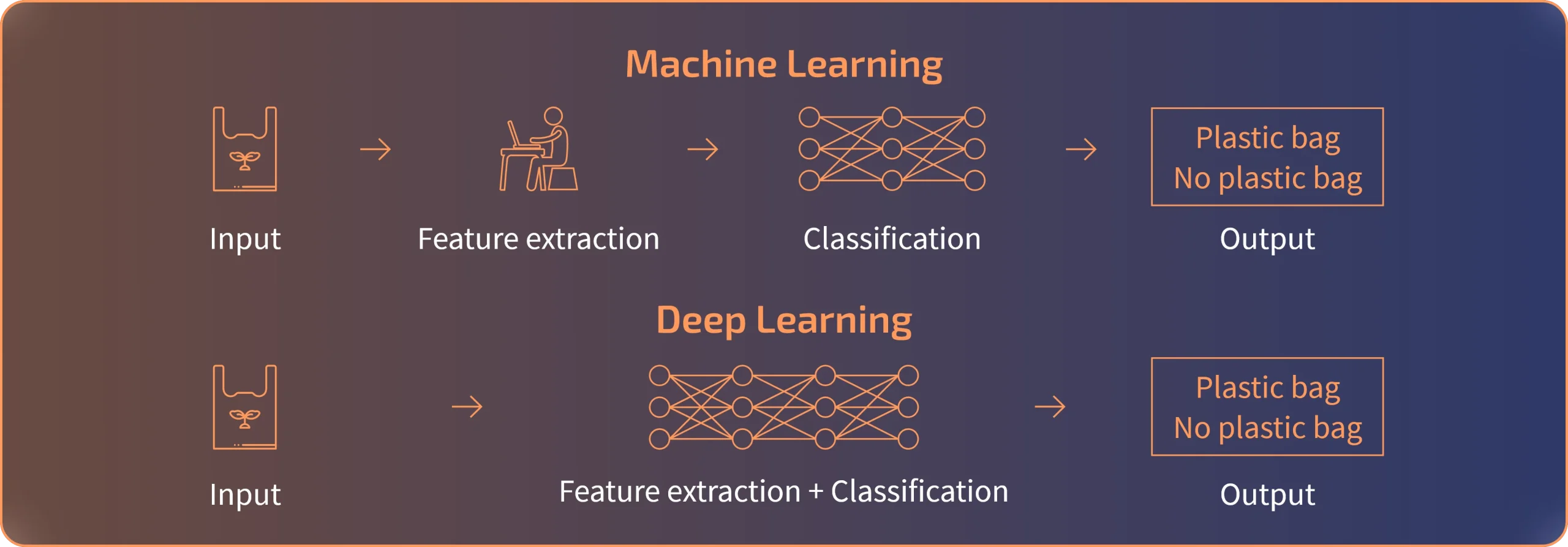

Machine Learning (ML) versus Deep Learning (DL)

As an example, in the water sector we use computer vision to drastically speed up an existing process where people inspect CCTV footage from sewer pipes to identify pipe defects. Now, let’s say an AI based on a basic ANN model is “in training” to identify defects in pipes. This “non-deep” AI can improve by asking its human supervisor to categorize footage that it cannot categorize, such as a plastic bag in a pipe. It might see a plastic bag, for example, and ask its human supervisor, “What is this?” The human will then code the object as “floatable debris.” As the AI learns, it may continue to ask its human supervisor for codes when it sees different floatables. But eventually, the AI will see enough floatables that it stops asking for support, because it has learned how to recognize a plastic bag without further human intervention.
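The ask-the-supervisor loop described above can be sketched roughly as follows. This is a simplified stand-in, not how any particular sewer AI product works: it uses a nearest-example lookup instead of a neural network, and the class name, the two-number “features,” the distance threshold, and the `supervisor` function are all assumptions made for illustration.

```python
import math


class HumanInTheLoopClassifier:
    """Codes an observation itself when it resembles something it has
    already been taught; otherwise asks a human and remembers the answer."""

    def __init__(self, ask_human, max_distance=1.0):
        self.examples = []              # (features, code) pairs learned so far
        self.ask_human = ask_human      # callback standing in for the supervisor
        self.max_distance = max_distance
        self.questions_asked = 0

    def classify(self, features):
        nearest = min(
            self.examples,
            key=lambda example: math.dist(example[0], features),
            default=None,
        )
        if nearest is not None and math.dist(nearest[0], features) <= self.max_distance:
            return nearest[1]           # confident: no human needed
        code = self.ask_human(features)  # unsure: "What is this?"
        self.questions_asked += 1
        self.examples.append((features, code))
        return code


def supervisor(features):
    # Hypothetical human coder: a large first feature means floatable debris.
    return "floatable debris" if features[0] > 5 else "clean pipe"


clf = HumanInTheLoopClassifier(supervisor, max_distance=1.0)
frames = [(8.0, 1.0), (8.3, 1.2), (1.0, 0.5), (8.1, 0.9), (1.2, 0.4)]
codes = [clf.classify(frame) for frame in frames]
print(codes)
print("questions asked:", clf.questions_asked)
```

In this run the classifier asks the supervisor about only two of the five frames; the rest are close enough to earlier examples that it codes them on its own.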

These days, however, we increasingly have AI that uses more advanced ANN. This is an advanced type of machine learning that’s called deep learning. And unlike classical machine learning, deep learning doesn’t require human intervention to learn. It has the ability to improve itself by learning from its own mistakes. But here’s the thing: deep learning requires vast amounts of training data, processing power, and time to learn in order to produce accurate results. Newer models of sewer AI use deep learning.

The point is, artificial intelligence must first learn by using machine learning, whether the AI is based on a basic ANN model or a deep learning model. And as it learns, it will make mistakes and learn from them, with or without human intervention, depending on the kind of machine learning the AI employs.

But unlike humans, when an AI makes a mistake and that mistake is corrected, it should never make that same mistake again. And that’s important when considering the opportunities the water sector faces with developing and adopting AI solutions.

Because humans get tired. They get sick. They forget. They retire and take their hard-earned experience with them. Humans don’t have the ability to transfer the experience to the next “version” of the operator, while an AI does this by default.

New operators will continue to make mistakes in each generation until each generation gains the experience, but AI will not after it learns from its mistakes. And that represents an opportunity in the water sector because if we allow these AI solutions to learn from their mistakes and increasingly get better, that improved knowledge through collaboration with humans can expand to all AI in the water industry and beyond.

Computers are now fast enough, and data plentiful enough, to employ deep learning AI in the water sector in ways that will drastically change how the industry operates. Even so, the industry faces unique barriers to adopting new technology. First, the water industry is driven strongly by regulation rather than by profit. Second, the sector deals with a resource that sustains human life and health, and for this reason it is risk averse. Both characteristics will need to be addressed to facilitate the adoption of AI in the water sector.

First, regulatory pressures have a strong impact on the technology our sector adopts. For example, hydraulic models used to be very skeletonized, but when the US Environmental Protection Agency (EPA) mandated that utilities monitor chlorine residuals in systems, and gave utilities the option of substituting model results for field observations, suddenly, all-pipes modeling technology exploded, which then also became beneficial in other ways.

We don’t know what regulations will emerge that may push the sector towards adopting more AI solutions. For example, there could be requirements for utilities to optimize their massive energy costs. The EPA estimates that drinking water and wastewater systems account for two percent of overall energy use in the US, generating 45 million tons of greenhouse gases annually. Regulations requiring utilities to optimize their energy use may drive the sector to adopt AI technologies faster, because those AI technologies can help solve energy use challenges.

Second, AI can and will make mistakes, and that fact bumps up against the water utility sector’s aversion to risk. That means instead of the water sector allowing AI to jump in and make decisions at the speed we’ve seen with self-driving cars, for example, the sector will likely take a slower approach. It will probably be more like a collaboration between AI and humans in the water sector, beginning with AI making suggestions and providing decision support.

Instead of full self-driving cars, water AI will provide a smooth cruise control that will also provide suggestions to operators that could optimize decision making.

That’s where “digital twins” come into play. In the water industry, a digital twin is a digital replica of utility assets and performance, like a water system network. A digital twin can employ machine learning applications, so engineers and operators can test the outcome of decisions before making those decisions in the real world.

Eventually, though, our sector will get comfortable enough with AI suggestions to let it make more and more impactful decisions. We already have sensors turning pumps on and off, for example. With AI, we can be smarter about when pump energy is used. And that doesn’t necessarily mean utility jobs will disappear. Instead, utility operators who lack time and resources will hand off tedious tasks to an AI they trust, so they can better focus on other aspects of their heavy workloads that require human intelligence.
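As a rough illustration of being “smarter about when pump energy is used,” the sketch below suggests the cheapest hours to run a pump and leaves the final decision to the operator. The hourly prices and the function name are hypothetical, and the sketch deliberately ignores tank levels, demand, and pressure constraints that any real scheduling tool would have to respect.

```python
def suggest_pump_hours(hourly_prices, hours_needed):
    """Return the indices of the cheapest hours, in chronological order.

    This only produces a suggestion for an operator to review;
    it does not switch any pump on or off.
    """
    if hours_needed > len(hourly_prices):
        raise ValueError("not enough hours in the planning window")
    cheapest_first = sorted(range(len(hourly_prices)), key=lambda h: hourly_prices[h])
    return sorted(cheapest_first[:hours_needed])


# Made-up energy prices for a six-hour planning window.
prices = [0.30, 0.12, 0.10, 0.25, 0.40, 0.11]
suggested = suggest_pump_hours(prices, hours_needed=3)
print("suggested pumping hours:", suggested)
```

Keeping the output as a suggestion rather than a command matches the decision-support role described earlier: the operator stays in control while the AI does the tedious comparison.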

Qatium is co-created with experts and thought leaders from the water industry. We create content to help utilities of all sizes to face current & future challenges.

Saša Tomić, Digital Water Lead at Burns & McDonnell, is a Qatium advisor.

